Weekly Reflection 3: AI, Learning, and Teaching

My introduction to AI goes back to the first time I heard Siri on my sister’s phone. I remember joking around with it and not taking it very seriously. At that time, it felt more like a novelty than anything meaningful. AI as a deeper and more serious concept came to me later through the book Life 3.0: Being Human in the Age of Artificial Intelligence by Max Tegmark. I really enjoyed reading it, and several ideas stayed with me, especially the opening scenario that imagines a near-utopian future shaped by advanced AI, as well as the discussion around job replacement. That part felt especially relevant when thinking about education and the future of teaching.

What stands out to me now is not the utopia described in the book, but how fast AI has entered everyday conversations and learning spaces. Without exaggeration, almost every course in our program now mentions AI, includes readings about it, or dedicates at least one session to discussing it. The speed of this shift feels significant and overwhelming.

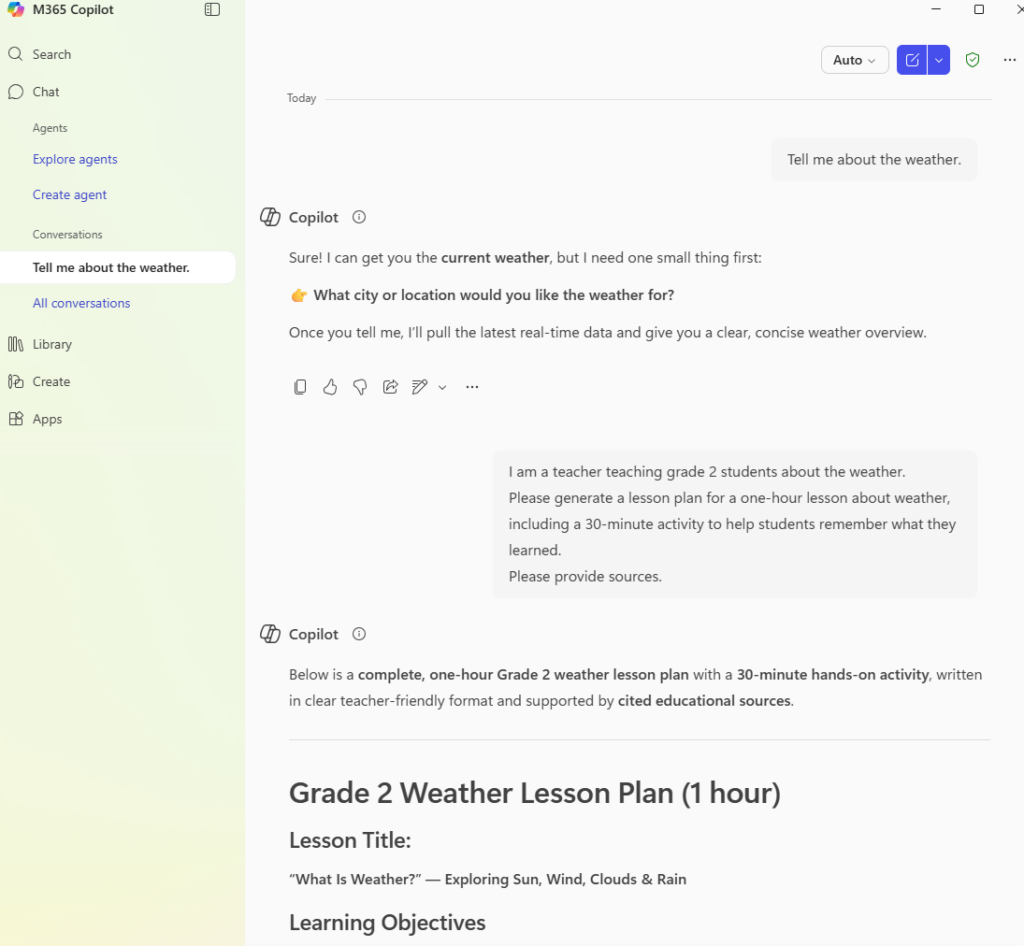

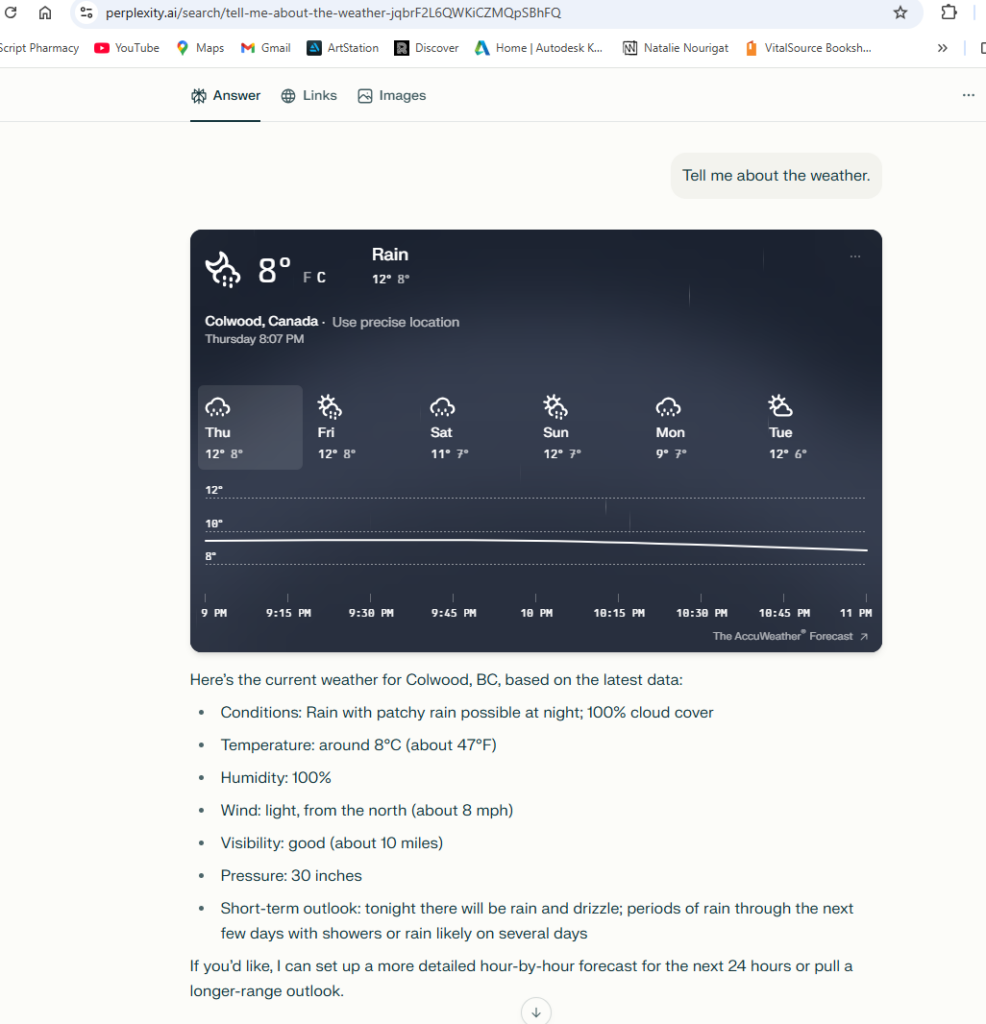

In education, AI has helped me by breaking down complex instructions and simplifying academic language, very much like what Robert Clapperton describes in his TED Talk. It has also helped me quickly scan through multiple papers to decide which ones are worth reading more closely for my research. For that, I used ChatGPT, but as part of this week’s activities, I took the opportunity to try AI tools I had not used before, including Perplexity and Microsoft Copilot. I first tested a very vague prompt: “Tell me about the weather.” Copilot responded, “What city or location would you like the weather for?” while Perplexity, which apparently had access to my location, came up with a detailed weather report for my area. I then experimented with both tools by copying and pasting the prompts given by our professor, and noticed that their responses are pretty similar when the prompts are solid. This comparison reinforced for me that no AI tool should be trusted automatically. Comparing tools and questioning outputs is an essential part of AI literacy.

Through these exercises, I learned very quickly that AI cannot reliably produce deep academic analysis, accurate scholarly connections, or proper citations, and I actually appreciate that limitation. Knowing what AI cannot do has pushed me to improve those skills myself. Instead of feeling overwhelmed, freezing, or quitting, I now have a tool that helps me enter the learning process. Interestingly, recognizing AI’s imperfections makes the learning process feel more human and collaborative. The result is that I put more effort into learning, not less.

One downside is that I rely on people less, which might be good news for my sister, who has been my tutor for most of my life. As someone who has both been tutored and tutored others, this makes me think carefully about balance and connection in learning.

As a future teacher, I find that these experiences strongly shape how I think about AI in the classroom. I would allow students to use AI in areas where it supports learning rather than replaces it. For students like me, who benefit from quick clarification, visual mapping, or reduced anxiety, AI can act like an educational assistant: not a friend, not the teacher, and not the thinker, but a helper. My role would be to explicitly teach students how to use AI in ways that build confidence and curiosity, rather than dependence or frustration.

At the same time, I am cautious. I am not naturally enthusiastic about learning new technologies unless I truly need them, and with AI, that caution feels justified. Environmental impact, human rights concerns, and political issues cannot be ignored. We have seen before how easily we give in to technologies like cars, computers, and smartphones without fully reflecting on their long-term consequences. Overwhelm and overload feel like defining features of modern life, and AI has the potential to add to that.

I also noticed something important about how we judge information depending on where it comes from. When we are listening to a person in front of us, it often feels easier to question them or take their words with a grain of salt. When we read something or watch a recorded talk, we tend to trust it more. With AI, the risk is even greater: there is a tendency to accept its output as neutral, complete, or authoritative. AI does not have access to all peer-reviewed, fact-checked, or scholarly knowledge, especially in the human and social sciences. In these areas, AI can be misleading if treated as an objective source of truth. AI is not neutral; what it shows and what it hides are shaped by human decisions about data and algorithms.

This leads me to broader questions about access to knowledge and the freedom of science. If academic research were more openly accessible, with proper credit and ethical use, learners would be better equipped to engage directly with reliable sources, rather than relying on filtered or simplified information found online. Without that openness, AI risks reinforcing existing power structures instead of supporting critical thinking and genuine learning.

For me, AI ultimately comes down to intention and practice. Intention shapes how we use it, and practice shapes who we become. As a teacher, I find that AI constantly reminds me to reflect on why I am here, where I am heading, and what truly matters in education. That kind of reflection cannot be automated. It comes from being present, being human, and practicing responsibility again and again.